January 15, 2021

The risk misinformation poses to your organization

GUESTS

Amil Khan, Founder and Director, Valent Projects

Paul Quigley, CEO and Co-Founder, NewsWhip


During the 10th episode of the Pulse, NewsWhip founder and CEO Paul Quigley was joined by Amil Khan to comment on misinformation versus disinformation, explain why some brands are more successful at confronting misinformation, and discuss campaigns that reestablish common ground.

Guest

Valent Projects founder and director Amil Khan has a passion for creating positive social impact and combating disinformation. Amil has advised governments on addressing disinformation narratives and brings a wealth of first-hand journalism experience as a former Reuters foreign correspondent and BBC investigative reporter. You can follow Amil on Twitter at @Londonstani.

Note: This transcript has been modified for clarity and brevity.

Paul Quigley: [begins at 00:21] As always, our motto on the Pulse is “speed to insight.” So over the next half hour, we want to give you some new insights into, and understanding of, what’s become a critical area of media and communications, and that is misinformation. NewsWhip’s got a little background here. Since early 2017, we’ve been proud to partner with various initiatives, including charities, universities, researchers, NGOs, and agencies that are smartly tackling the spread of misinformation in different ways. Misinformation is top of mind for many of us after the U.S. Capitol was invaded last week by what seemed like a YouTube comment thread come to life, full of people who were, I suppose, almost deranged by political misinformation.

While we’ve got to know this world a bit since 2017, Amil has considerably deeper experience, pedigree, and frontline experience working in it. Valent Projects focuses on social impact and on combating disinformation, and Amil also worked on the U.K. Cabinet Office’s initial assessment of emerging disinformation practices, I think as far back as 2013. He’s worked on issues including 5G conspiracies in the U.K., alt-right communities in Europe, and state-led disinformation in Africa. And prior to all of that, he spent 10 years as a Reuters correspondent in the Middle East and Africa. So you’ve got a lot of experience with this world of misinformation and disinformation, Amil. Could you give us some background on how you came into this world and what you’ve been doing in it?

Amil Khan: Thanks very much, Paul. I actually started as a journalist right after I graduated, and I graduated just before 9/11 and the Gulf War. So my initiation into the world of information and international politics was through the lens of what was happening in the Middle East at the time, which was the major international issue the whole world was looking at. And I felt very early on that there was a shift happening that young correspondents, other young journalists my age, could feel: the organizations we worked for, the organizations we saw as the things to aim for (Reuters, BBC, NBC, all these things), were being challenged as the “arbiters of the truth.” You could see that in Iraq, with insurgents producing videos, and in the whole thing about al-Qaeda and this apocryphal story of Osama bin Laden making VHS videos and then sending them to Al-Jazeera.

The theme that I couldn’t help noticing, that was really just smacking us in the face, was that there’s this idea of the mainstream, which takes its information from the BBC if you’re British or, internationally, from NBC, CNN, and state broadcasters. But there are increasingly alternative routes to get to audiences, whether online through internet forums or through new satellite channels, and that’s been going on since I started working. From there, my career was basically a fascination with that, with what’s happening, the bad and the good. Because yes, you mentioned the top news item right now, which is bad actors, but there’s also an opportunity there for good actors. That’s what really fascinated me. I didn’t think it was necessarily a bad thing that these guys were being challenged, but unfortunately we end up looking at the bad stuff most of the time.

Paul: Maybe just for people who may not know the difference between disinformation and misinformation, could you talk about which you saw as more prevalent back then?

Amil: I think both of them have jostled and borrowed from each other… So I should clarify, I should define. Both are false information at heart. Disinformation is when an actor is doing it purposefully and knows the information is false, but is seeking to get some sort of benefit out of it. It can be a government, there can be criminality, all sorts of stuff. Misinformation is when people believe it. So they’re putting out false information, but they vehemently believe that this information is true. It sounds like a subtle difference, but the infrastructure around how each is done then becomes very different. If it’s misinformation, it’s people basically being duped. If it’s disinformation, we see a kind of industrial process of churning stuff out and seeing what works.

If we look at the issue with the anti-vax and coronavirus stuff, there was both, and often you’ll find that there’s both. There were some state-led efforts to persuade people in particular countries that China wasn’t responsible, in the case of Chinese efforts, or, in Russia’s case, that 5G was actually responsible, which are linked to those states’ foreign policy aims: trying to disrupt others or protect themselves. But obviously those narratives were taken up by some communities who believed them, pushed them out, and amplified them, completely believing that whatever they were being told was true. So you’d end up with the same issue, the same theme, but both of those phenomena happening at the same time.

Paul: I really want to get into the difference in dynamics and structures between those. But before that, Amil, it would be worth maybe walking us through how you moved on from journalism into dealing more fully with these problems.

Amil: I left journalism after feeling that the world was changing, around 2008 or 2009. I’d spent quite a bit of time doing all the kinds of journalism I wanted to do. I did Reuters, as you mentioned, and I also did investigative documentaries at the BBC. But I felt that if you really wanted to affect how people were seeing information and seeing things around the world, that whole thing journalists want to do, which is bear witness to what’s happening and shine light in dark corners, that just wasn’t happening. And I was just about the right age where I thought, “I don’t have to just keep doing this when I don’t think it’s going anywhere. I’m still of an age where I can try something different.”

I went to charities, human rights organizations, movements, activists, people working on the kinds of things I was interested in, whom I used to watch as a journalist and think, “Why can’t you just be better at this? You’re getting totally gazumped by the bad guys.”

So I thought let me put my money where my mouth is, just go and offer myself to these guys, and say, “I can help you with this stuff. Let’s work together.”

After a little while of that, the U.K. Foreign Office approached me and said that the Syrian body that is the international alternative to the regime, activists basically, who came together and said, “We’re the autonomous alternative to the guy who’s using chemical weapons on people,” needed political communications support. So I said, “Great, that sounds like the perfect job for me.”

All these guys came from backgrounds that included being in jail for 20 years for advocating for human rights, and from different groups: women’s rights, anti-violence, all sorts of things. Just the kind of work I wanted to get into. And pretty much six or seven months after getting into that work, we started seeing the kind of Russian disinformation used in Crimea being used for the first time outside of that immediate area of influence, the Russian government’s area of influence. This was in Syria, as a response particularly to chemical weapons, but also generally against the White Helmets, the civil defense. I’d see the Facebook pages, I would see what was going on on Russia Today, and I would constantly be saying, “This is an issue. This is not going to just stay in Syria. This is having a massive impact on audiences in the U.K.”

The first time it really came into our world, when we realized it was going to have an impact here, was the vote in the U.K. Parliament on, as I recall, whether the U.K. would take part in the military response to the use of chemical weapons. I was seeing a very concerted effort to directly influence U.K. and American audiences to take a stand against that by portraying it as regime change or whatever. I just thought, “This needs to be recorded properly. This is something that we have to look into.” I ended up doing research into what it was, looking at the methodologies and the impact it could have in the future.

Paul: And has something changed in the last decade in our information environment to make our societies more vulnerable to misinformation?

Amil: Really good question. I think a lot of people grapple with it from different angles. There’s an argument that essentially we aren’t, that nothing’s changed, that human beings have always been susceptible to believing things they want to believe, which is true. There’s another line of argument, which says, “That might well be true, but the infrastructure of information has clearly changed.”

That is a new thing. There’s been some great work looking at how the Soviet Union used to spread disinformation across the world, and it was a laborious process. If you’ve seen some of the great New York Times coverage of it, you’ll see that they talk about planting something in an African newspaper, and five months later it might get picked up and end up somewhere else.

It was also slow, expensive, and difficult to do. The social media infrastructure that we have now has, I think, made it faster, slicker, and easier to test. Not only in terms of publishing, but also in terms of testing: all the Facebook ad A/B-testing capabilities built into this huge platform let you put out two lies and see which one really gets people going. And not only the actual lie, but what kind of images in the first three seconds of a video really get people going. And if you are really organized about it (some people have been, not just governments, but alt-right movements and anti-vaxxers), you can use that in a way that was just not possible in a previous age.

Paul: So A/B-testing, access to communities, and multi-polar channels all make it far easier to optimize and start hijacking and developing stories. When people are thinking about misinformation today, are there any areas you think should be understood better? Currently, I think, the narrative involves a lot of criticism of Facebook and a push toward more intensive moderation as the answer. With the banning of President Trump’s Twitter account, it looks like a win. It’s not clear long-term if it’s a win, I think, but it certainly seems called for and the right response in that situation. But is putting all of this on the platforms and calling it a moderation problem the right solution, or are we thinking about it wrong?

Amil: I think there’s a comfort level people feel in saying this is a really big problem that can be solved by shining a light on the information. I’ve heard the phrase, “sunlight’s the best disinfectant.” The research, when you actually get into it, shows that it’s not. It’s the opposite, but it’s a comfortable place for us to be, because traditional views of how we deal with information as human beings are ingrained in how we see democracy, for example, and how we see ourselves interacting with each other: if you put something wrong out there, it’ll be challenged. So the way to go is much more information, and it all sort of balances itself out in the end.

I think a more uncomfortable reality that we need to deal with is that human beings tend to make decisions on an emotional basis rather than a rational one. And there’s more and more academic work looking at this now. Fact-checkers, for example, do a great job. Their work is based on the idea that we, as rational people, will see that something has been proved wrong, say, “Right, that’s it,” and discard it. The problem, the research shows, is that all that really does is point people to something they want to believe is true anyway. So they’ll discard the fact-check, and all they’ll fixate on is the fact that the information is out there. I think that’s a gap at the policymaker level. You often hear MPs talking about it, but I think it’s been a bit slow: the academic work on this, the stuff specialists talk about, the stuff you’ll see in think-tanks, hasn’t quite reached the level of the people who make our laws, unfortunately.

Paul: It seems like a real conundrum, because we’re starting to recognize how irrational people are, and a lot of psychology books many of us may have read in recent years show how we struggle at the best of times to generate any amount of impartiality in our minds, especially when it comes to questions of identity and politics. The closer you get to that, the harder it is to respond rationally, I think. And so maybe you can’t drown out the bad arguments with good arguments, because people aren’t reasoning. Does that mean that moderation is the way, or are there other paths for dealing with the problem?

Amil: I think there are multiple ways, and different organizations have different roles to play. There is clearly a legal route. But if you’re an organization, I think the key thing you can really do right now is think about how you approach communication. Organizations that are older, larger, and hierarchical, which we could sort of label “analog organizations,” organizations born in the analog era, have a different relationship to information. They assume, especially if they’re government-linked or linked to a really important person or to something that’s been around a long time, that they have legitimacy and credibility just because of who they are. And when they engage with an audience, they give out information as and when they think it’s necessary.

Whereas if you look at a newer, younger organization that came about in the last few years, whose DNA is the digital age, they tend to understand that credibility and legitimacy are earned on a daily basis. And that the credibility is borrowed from the audience. You don’t own it intrinsically. The audience has to decide to give it to you, and that shapes the way they interact, the way they put out their communications, when they talk. Content marketing is based on the idea of constantly giving value and engaging and showing people who you are, and proving and demonstrating it. What I have seen in our work is that when organizations behave like that to begin with, they are much better at handling disinformation because they’re less targetable.

It’s much easier to target an organization that will not put out a lot of information, that is kind of quiet and wants to hold everything back. You can target them knowing full well that they’re not going to respond for a week or two. You can get a lot of stuff out there in that time. It’ll go to a board. They’ll make a decision. They’ll maybe do something or not do something. Whereas a more nimble organization has already built, and is constantly refining and pushing out, this idea: “This is what we are. This is what we do. And if we mess up, we’ll own it, and we’ll try and rectify it.” Organizations like that are just harder to target with disinformation.

Paul: That’s a fascinating insight. So an ongoing, proactive degree of communication and trust building that’s happening every day builds the right position in the audience’s mind, and maybe also the right organizational habit for responding to things when they happen. So that’s happening on both sides, inside and outside the organization. An immune system, I suppose, since we talk about immune systems a lot lately, is being developed internally for dealing with things.

Amil: Exactly.

Paul: Very good. You can imagine two organizations, Big Pharma company A and Big Pharma company B, both subject to various disinformation campaigns, especially if they produce a drug in a sensitive area, as vaccines are today. If one of them is much more proactive in terms of engagement, it’s straightaway going to be less vulnerable in how its audiences perceive it, and in a better position to talk back.

However, what if they’re just subject to all-out false claims? To draw the parallel with the U.S. Capitol, we’ve got the problem in the U.S. of people living in very different realities in terms of what they believe to be true about the election, and the huge challenge of reestablishing a common reality. Should you be drawing a line around some audiences and saying, “Okay, we can’t reach these guys. We give up. Here’s the group we can reach”? Or do you try to reach everyone with a message and convince those who look very difficult to convince?

Amil: I think both need to be done, but you look at the timeframe differently. There’s tactical and there’s strategic. If you’re in crisis mode… a lot of large organizations have been targeted in the last few years and will be targeted more after the pandemic. We’ve seen Iranian and Chinese actors, and also just inter-company competition. Disinformation, one of the tools, and a major one, that people can use against each other, has gone from politics to commerce. In that scenario, yes, it’s transparency. It’s also reacting quickly and understanding the audiences. Is it noise? We’ve done work where somebody says, “Oh, we’re being attacked. All this false stuff is going out there.” And when we’ve looked at it, we’ve noticed that it’s basically four or five groups running hundreds of accounts. Facebook calls that coordinated inauthentic behavior. I know you guys have seen that stuff, and it’s designed to make the organization panic. It’s almost an old-school way of doing it, using new tools. Of course, the comms department, or the person in charge, just sees this barrage of false information and thinks, “Oh my God, what do we do? We have to respond to every single one.”

In fact, it’s not really an organic attack, and it’s not something they need to put that much resource into; they just can’t see that it’s actually manufactured and not authentic. We see a lot of stuff like that, and companies can quickly triage and say, “Right, where are we at? What is the problem? Let’s put resources into the right places.”

That kind of thing can happen when you’re in crisis mode. But if you’re looking at long-term issues, such as politics and the spread of alt-right fantasyland thinking, or the anti-vaxxer stuff and pharma, those are issues that can be addressed, but they have to be addressed over time. And the key thing in them is understanding the key emotional drivers that people have. We have seen really, really amazing attempts at dealing with these issues on a long-term basis. I remember one of the most successful was a campaign in the U.S. to pass marriage equality. This is a tough thing to do because they had to pass it in states that were highly conservative and religious, and through an election.

This is not something that electioneers have a lot of experience in, because they tend to just focus on people who already agree with them. So actually changing people’s views on a contentious issue is difficult, but what they did was conduct research into these groups to find a point of commonality they could build a campaign on, and to challenge their own preconceptions about the audience. A lot of the people doing the campaigning came in seeing religious bigots and thinking, “These people have this mindset.”

When they did the research, they found that there actually was a positive place to interact with this audience. And it was about family. It was about their fears that families, or family values, were being eroded. And that’s a point at which you can say, “Yes, you’re worried about this, and we’re worried about this. We can address this.”

And you can build campaigns from there, and then start thinking about a lot of ways of engaging audiences. When it comes to Big Pharma, it can be issues around people’s fears about health and safety. The bad guys want to make the conflict worse, because conflict drives eyeballs. Even if it’s the misinformation stuff, just a YouTuber who wants to get lots of views or whatever, the tricky bit is getting beyond that initial instinctive reaction, “Oh, you’re that tribe and we’re this tribe, and you said this, so that means we don’t like you,” and finding the commonality that you can actually build some sort of positive engagement around. It is possible, and it does happen.

Paul: I agree. We’ve seen that in Ireland with our last two constitutional referendums, one on marriage equality and the other on abortion. Those were both carried, I think, with a lot of “how to talk to your granny about why these two men want to get married,” a lot of direct connection, and demonstrating alignment of values. It’s such a tall order at the moment. We see misinformation as a kind of bogeyman, but there are a lot of ordinary people who are convinced of various things about 5G or vaccination. It will be very hard to convince many people in the U.S. to try and understand the emotional drivers and personal situations of those who invaded the Capitol last week, right? So this must be hard advice. How do you get people to accept that there has to be some engagement and understanding? It’s very difficult in a conflict to have any interest in understanding the other side’s position other than to say they’re bad.

Amil: We do find it’s hard. I think a good way of describing why is that people often speak to us about these problems when they are really at crunch time, and that doesn’t give you a lot of scope to address it at a fundamental level. So what we do in that scenario is say, “Right, we can do some things that show you that there is space.” We can help alleviate your immediate risk or problem and give you an idea that there is more to be done later. So when you come out of this crisis, maybe you can start thinking about this in other ways, and we’ll be happy to help.

If we’re lucky enough to be asked about a problem even six months or a year out, then we can start talking about it. It’s often with people we’ve worked with before: we’ve done the crisis management at some point, and then we’ve come back and said, “Right, let’s look at this problem from a wider perspective.”

When we can do that, and when we can get some research on a problem, particularly ethnographic research, we can explain it to people at a higher level and say, “We’ve built a theory of change here.” When we look at this stuff, we do it on the basis of best practice that either I’ve seen in government or we’ve seen in the development sector or in campaigns. People at Greenpeace, for example, do amazing campaigns.

If we can get a convincing theory of change that’s based on research, we find that we get a much more receptive response from the people who are making the decisions. It becomes our job to really show that there is potential to do something here. It’s like the famous saying that “we come to the right decision after we find all the wrong decisions.” There is an element of that, because people, and organizations, are comfortable in the mindset that “I believe this to be true, so other people must believe it to be true.” I’ve worked on elections, so we definitely see that. It takes time, but we do get there.

Paul: It’s very interesting to see that misinformation is in fact going to be a long-term communications issue, and what you’re saying is that it’s embedded entirely in wider, long-term thinking about communications and audience influence. I think that’s a very important thing to take home, because maybe misinformation is currently living with the crisis team, or it’s seen as something that’s an acute problem. If it’s fundamental to how the media pipes have changed in the last decade, it’s going to keep happening, and the solutions are quite long-term too. There’s going to be a lot of work in the communications and media space on this in the coming years, I think.

Amil: I think there’s an institutional culture issue. I remember speaking to somebody who had worked on election campaigns in the U.S., both of Barack Obama’s campaigns. The social media team said that people need to see that what you’re saying works and is true, and for them, that was raising money. He described this scene, and I can just picture it, in an election where everybody’s talking about how much money they’ve raised from donors. Traditionally it’s the big-ticket donors who always sit in the middle of the room, because they raised the most money and everybody looks to them. Over these weekly meetings, the money raised by the guys on the fringes of the room, the social media team with its small, individual donors, kept growing and growing and growing. Every time they went over the results, there would be more social media guys there. A few million, 10 million, 20 million, until there came a day when they said it was a hundred million or something, which was more than the big-ticket donors, where all the resources went. The person who was explaining it to me said, “That day the room changed.” Those guys on the edge came into the middle.

That exemplified, for me, the kind of thinking that needs to happen in organizations. Of course, a good election team is much more flexible and dynamic than a big organization. It’s just easier; it comes together over a shorter period of time. Organizations that understand that and can adapt to it are going to survive. We’ve seen that, and I bet you’ve seen it in the work you guys do around the pandemic, when people talk to us about the way things are changing and have changed and how they’re going to respond.

We just get the impression that some organizations can stop looking at information as a sort of junk thing on the side, something somebody else can worry about and just tell you about, marketing that just makes the front of the shop look nice. Those are the ones who say, “No, this is how we engage our different stakeholders, on a minute-by-minute basis, woven through all our functions. That’s how we’re going to prosper.” You can see they’re adapting. They’re going to do a lot of it.

Paul: That’s what the new media ecosystem demands, and what organizations’ relationships with the outside world may demand going into the future. Amil, this has been fascinating, and I’ve broken my cardinal rule: I’ve allowed us to go over time, but I’m really grateful. So thank you for joining us today. This has been a fascinating conversation, and we’ll be continuing the conversation on misinformation in two weeks’ time, at the same time. We’re going to be joined by Marshall Manson of the Brunswick Group, who represents and works with various organizations that also face misinformation threats, and we’re going to talk about his framework for addressing those. So, Amil, thank you again for joining us today.

View our on-demand webinars

Sign up to our next webinar

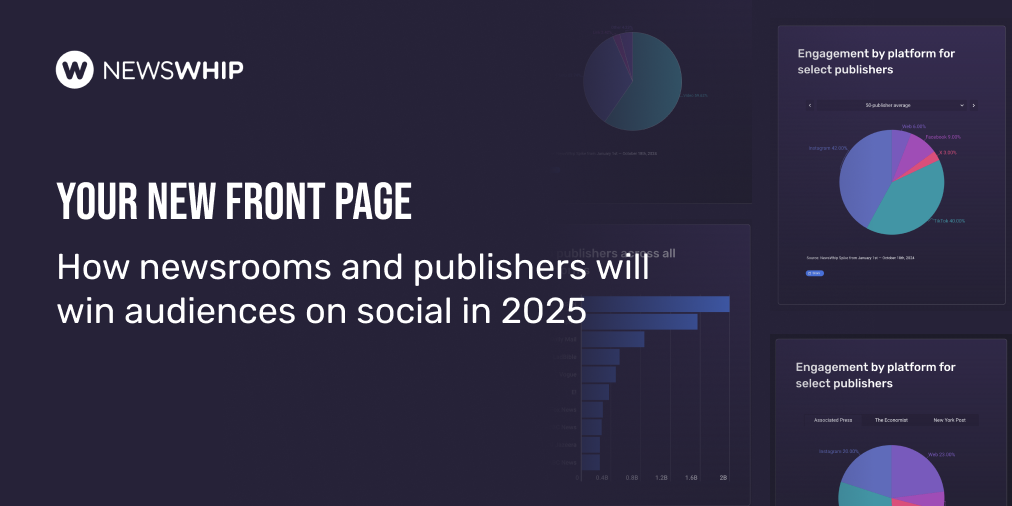
